Black Jade

In the darkest of ages, the Lord of the Lightstone is a lowly man, and lost…

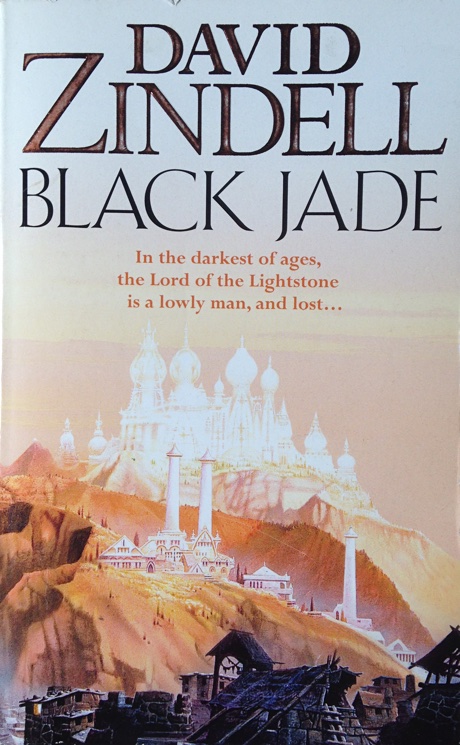

In the afternoon my mother and I visited a local bookstore that was holding a clearance sale; every book was priced at 1 euro. I couldn't find anything for myself, but my mother found Black Jade by David Zindell.

I had never heard of this author, but decided to buy the book anyway.